Ensure you are familiar with the OCSS7 architecture before going further. In particular, ensure you have read the following:

Network planning

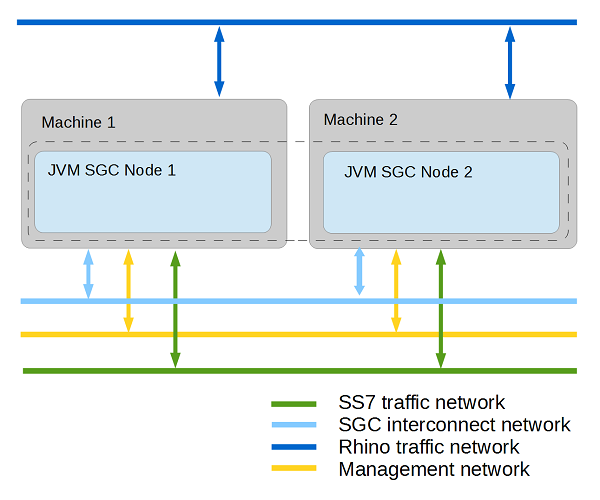

When planning an OCSS7 deployment, OpenCloud recommends preparing IP subnets that logically separate different kinds of traffic:

| Subnet | Description |
|---|---|
| SS7 network | dedicated for incoming/outgoing SIGTRAN traffic; should provide access to the operator’s SS7 network |
| SGC interconnect network | internal SGC cluster network with failover support (provided by interface bonding mechanism); used by Hazelcast and communication switch |
| Rhino traffic network | used for traffic exchanged between SGC and Rhino nodes |
| Management network | dedicated for managing tools and interfaces (JMX, HTTP) |
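As a planning aid, the four subnets can be written down and checked for accidental overlap before deployment. The sketch below uses Python's standard `ipaddress` module. The CIDR ranges and the `find_overlaps` helper are hypothetical placeholders chosen for illustration, not values recommended by OpenCloud.

```python
import ipaddress
from itertools import combinations

# Hypothetical address plan: the CIDR ranges are illustrative placeholders only.
SUBNET_PLAN = {
    "SS7 network": "10.10.1.0/24",
    "SGC interconnect network": "10.10.2.0/24",
    "Rhino traffic network": "10.10.3.0/24",
    "Management network": "10.10.4.0/24",
}


def find_overlaps(plan):
    """Return pairs of subnet names whose address ranges overlap."""
    networks = {name: ipaddress.ip_network(cidr) for name, cidr in plan.items()}
    return [
        (name_a, name_b)
        for (name_a, net_a), (name_b, net_b) in combinations(networks.items(), 2)
        if net_a.overlaps(net_b)
    ]


if __name__ == "__main__":
    overlaps = find_overlaps(SUBNET_PLAN)
    print("overlapping subnets:", overlaps or "none")
```

An empty result means each kind of traffic has its own address range, which matches the logical separation described in the table.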

SGC Stack network communication overview

The SS7 SGC uses multiple logical communication channels that can be separated into two broad categories:

- SGC directly managed connections: connections established directly by SGC subsystems, configured as part of the SGC cluster-managed configuration
- Hazelcast managed connections: connections established by Hazelcast, configured as part of static SGC instance configuration.

SGC directly managed connections

The following table describes network connections managed directly by the SGC configuration.

| Protocol | Subsystem | Subnet | Defined by | Usage |
|---|---|---|---|---|
| TCP | TCAP | Rhino traffic network | | Used in the first phase of communication establishment between the TCAP Stack (CGIN RA) and the SGC cluster. The communication channel is established during startup of the TCAP Stack (CGIN RA activation), and closed after a single HTTP request / response. |
| TCP | TCAP | Rhino traffic network | | Used in the second phase of communication establishment between the TCAP Stack (CGIN RA) and the SGC cluster. The communication channel is established and kept open until either the SGC Node or the TCAP Stack (CGIN RA) is shut down (deactivated). This connection is used to exchange TCAP messages between the SGC Node and the TCAP Stack using a custom protocol. The level of expected traffic is directly related to the number of expected SCCP messages originated and destined for the SSN represented by the connected TCAP Stack. |
| | | SGC interconnect network | | Used by the communication switch (inter-node message transfer module) to exchange message traffic between nodes of the SGC cluster. The communication channel is established between nodes of the SGC cluster during startup, and kept open until the node is shut down. During startup, the node establishes connections to all other nodes that are already part of the SGC cluster. The level of expected traffic depends on the deployment model, and can vary anywhere between none and all traffic destined and originated by the SGC cluster. |
| SCTP | M3UA | SS7 network | | Used by SGC nodes to exchange M3UA traffic with Signalling Gateways and/or Application Servers. The communication channel lifecycle depends directly on the SGC cluster configuration; that is, the enabled attribute of the connection configuration object and the state of the remote system with which SGC is to communicate. The level of traffic should be assessed based on business requirements. |
| JMX over TCP | Configuration | Management network | | Used for managing the SGC cluster. Established by the management client Command-Line Management Console, for the duration of the management session. The level of traffic is negligible. |

Hazelcast managed connections

Hazelcast uses a two-phase cluster-join procedure:

- Discover other nodes that are part of the same cluster.
- Establish one-to-one communication with each node found.

Depending on the configuration, the first step of the cluster-join procedure can be based either on UDP multicast or direct TCP connections. In the latter case, the Hazelcast configuration must contain the IP address of at least one other node in the cluster. Connections established in the second phase always use direct TCP connections established between all the nodes in the Hazelcast cluster.
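Because the second phase connects every member to every other member, the number of connections grows quadratically with cluster size. The following sketch enumerates those links, assuming one TCP connection per pair of nodes. The node names and the `full_mesh_links` helper are hypothetical and are not part of Hazelcast or the SGC.

```python
from itertools import combinations


def full_mesh_links(nodes):
    """Return every unordered pair of nodes, one entry per member-to-member link."""
    return list(combinations(sorted(nodes), 2))


if __name__ == "__main__":
    # Hypothetical three-node SGC cluster.
    for left, right in full_mesh_links(["sgc-1", "sgc-2", "sgc-3"]):
        print(f"{left} <-> {right}")
```

For a cluster of n nodes this yields n(n-1)/2 links, which is a useful figure when sizing firewall rules on the SGC interconnect network.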

Traffic exchanged over the SGC interconnect network by Hazelcast connections is mainly related to:

- SGC runtime state changes
- SGC configuration state changes
- Hazelcast heartbeat messages.

During normal SGC cluster operation, the amount of traffic is negligible and consists mainly of messages distributing SGC statistics updates.

Inter-node message transfer

The communication switch (inter-node message transfer module) is responsible for transferring data traffic messages between nodes of the SGC cluster. After the initial handshake message exchange, the communication switch does not originate any network communication by itself. It is driven by requests of the TCAP or M3UA layers.

Usage of the communication switch involves additional message-processing overhead, consisting of:

- CPU processing time to encode and later decode the message: this overhead is negligible
- network latency to transfer the message between nodes of the SGC cluster: overhead depends on the type and layout of the physical network between communicating SGC nodes.

This overhead is unnecessary in normal SGC cluster operation, and can be avoided during deployment-model planning.

Below are outlines of scenarios involving communication switch usage: Outgoing message inter-node transfer and Incoming message inter-node transfer; followed by tips for Avoiding communication switch overhead.

Outgoing message inter-node transfer

A message that is originated by the TCAP stack (CGIN RA) is sent over the TCP-based data-transfer connection to the SGC node (node A). It is processed within that node up to the moment when actual bytes should be written to the SCTP connection, through which the required DPC is reachable. If the SCTP connection over which the DPC is reachable is established on a different SGC node (node B), then the communication switch is used. The outgoing message is transferred, using the communication switch, to the node where the SCTP connection is established (transferred from node A to node B). After the message is received on the destination node (node B) it is transferred over the locally established SCTP connection.
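The outgoing decision can be sketched as a lookup of which node holds an SCTP connection towards the DPC. This is a simplified model, not the SGC implementation: the `outgoing_path` function, the data layout, and the rule for choosing among several remote nodes (first in sorted order) are all assumptions made for illustration.

```python
def outgoing_path(origin_node, dpc, sctp_connections):
    """Return the list of SGC nodes an outgoing message traverses.

    sctp_connections maps a node name to the set of DPCs reachable through
    SCTP connections established locally on that node.
    """
    if dpc in sctp_connections.get(origin_node, set()):
        # DPC reachable locally: the communication switch is not used.
        return [origin_node]
    for node in sorted(sctp_connections):
        if node != origin_node and dpc in sctp_connections[node]:
            # Message is transferred through the communication switch.
            return [origin_node, node]
    raise LookupError(f"DPC {dpc} is not reachable from any SGC node")


if __name__ == "__main__":
    # Hypothetical cluster: node A reaches DPC 100, node B reaches DPCs 100 and 200.
    connections = {"A": {100}, "B": {100, 200}}
    print(outgoing_path("A", 100, connections))
    print(outgoing_path("A", 200, connections))
```

A path with a single node means the message left over a local SCTP connection; a path with two nodes means it paid the inter-node transfer overhead.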

Incoming message inter-node transfer

A message received by an M3UA connection, with a remote Signalling Gateway or other Application Server, is processed within the SGC node where the connection is established (node A). If the processed message is a TCAP message addressed to an SSN available within the SGC cluster, the processing node is responsible for selecting a TCAP Stack (CGIN RA) corresponding to that SSN. The TCAP Stack (CGIN RA) selection process gives preference to TCAP Stacks (CGIN RAs) that are directly connected to the SGC node which is processing the incoming message. If a suitable locally connected TCAP Stack (CGIN RA) is not available, then a TCAP Stack connected to another SGC node (node B) in the SGC cluster is selected. After the selection process is finished, the incoming TCAP message is sent either directly to the TCAP Stack (locally connected TCAP Stack), or first transferred through the communication switch to the appropriate SGC node (transferred from node A to node B) and later sent by the receiving node (node B) to the TCAP Stack.
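The local-preference behaviour described above can be illustrated with a short sketch. It models only the preference for locally connected TCAP Stacks; how the SGC chooses among several equally suitable stacks is not covered here, so taking the first candidate is an assumption. The `TcapStack` type and `select_tcap_stack` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TcapStack:
    name: str
    ssn: int
    sgc_node: str  # SGC node this stack is directly connected to


def select_tcap_stack(ssn, processing_node, stacks):
    """Pick a stack serving the SSN, preferring ones connected to processing_node.

    Returns (stack, uses_switch), where uses_switch is True when the message
    must first be transferred to another SGC node.
    """
    candidates = [s for s in stacks if s.ssn == ssn]
    if not candidates:
        raise LookupError(f"no TCAP Stack serves SSN {ssn}")
    local = [s for s in candidates if s.sgc_node == processing_node]
    if local:
        return local[0], False  # assumption: first local candidate wins
    return candidates[0], True  # assumption: first remote candidate wins


if __name__ == "__main__":
    stacks = [TcapStack("ra-1", 146, "A"), TcapStack("ra-2", 146, "B")]
    print(select_tcap_stack(146, "B", stacks))
```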


TCAP Stack (CGIN RA) selection

TCAP Stack selection is invoked for messages that start a new transaction ( TCAP Stack selection is described by following algorithm:


Avoiding communication switch overhead

A review of the preceding communication-switch usage scenarios suggests a set of rules for deployment, to help avoid communication-switch overhead during normal SGC cluster operation.

| Scenario | Avoidance Rule | Configuration Recommendation |
|---|---|---|
| Incoming message inter-node transfer | If an SSN is available within the SGC cluster, at least one TCAP Stack serving that particular SSN must be connected to each SGC node in the cluster. | The number of TCAP Stacks (CGIN RAs) serving a particular SSN should be at least the number of SGC nodes in the cluster. |
| Outgoing message inter-node transfer | If the SGC Stack is to communicate with a remote PC (another node in the SS7 network), that PC must be reachable through an M3UA connection established locally on each node in the SGC cluster. | When configuring remote PC availability within the SGC Cluster, the PC must be reachable through at least one connection on each SGC node. |
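Both rules can be checked mechanically against a planned deployment. The sketch below is one possible way to do that. The `check_avoidance_rules` function and its input layout are hypothetical and do not correspond to any SGC tool or configuration format.

```python
def check_avoidance_rules(sgc_nodes, tcap_stacks, pc_reachability):
    """Return a list of rule violations (empty when both rules hold).

    tcap_stacks: iterable of (ssn, sgc_node) pairs, one per connected TCAP Stack.
    pc_reachability: dict mapping a remote PC to the set of SGC nodes that have
    a local M3UA connection through which that PC is reachable.
    """
    nodes = set(sgc_nodes)
    violations = []

    # Rule 1: every SSN needs at least one TCAP Stack on each SGC node.
    nodes_by_ssn = {}
    for ssn, node in tcap_stacks:
        nodes_by_ssn.setdefault(ssn, set()).add(node)
    for ssn, covered in sorted(nodes_by_ssn.items()):
        for node in sorted(nodes - covered):
            violations.append(f"SSN {ssn}: no TCAP Stack connected to {node}")

    # Rule 2: every remote PC must be reachable locally on each SGC node.
    for pc, reachable_from in sorted(pc_reachability.items()):
        for node in sorted(nodes - reachable_from):
            violations.append(f"PC {pc}: not reachable locally from {node}")

    return violations


if __name__ == "__main__":
    # Hypothetical two-node plan that breaks both rules on node B.
    problems = check_avoidance_rules(
        ["A", "B"],
        [(146, "A")],
        {2001: {"A"}},
    )
    print("\n".join(problems) or "no violations")
```

A plan with no violations should not need the communication switch during normal operation, although the switch is still used when a connection or TCAP Stack fails.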

SGC cluster membership and split-brain scenario

The SS7 SGC Stack is a distributed system. It is designed to run across multiple computers connected across an IP network. The set of connected computers running SGC is known as a cluster. The SS7 SGC Stack cluster is managed as a single system image. SGC Stack clustering uses an n-way, active-cluster architecture, where all the nodes are fully active (as opposed to an active-standby design, which employs a live but inactive node that takes over if needed).

SGC cluster membership state is determined by Hazelcast based on network reachability of nodes in the cluster. Nodes can become isolated from each other if a networking failure causes network segmentation. This carries the risk of a "split brain" scenario, where nodes on both sides of the split act independently, each assuming that the nodes on the other side have failed. Avoiding a split-brain scenario depends on the availability of a redundant network connection. For this reason, network interface bonding MUST be employed to serve connections established by Hazelcast.

Usage of a communication switch subsystem within the SGC cluster depends on the cluster membership state, which is managed by Hazelcast. Network connectivity as seen by the communication switch subsystem MUST be consistent with the cluster membership state managed by Hazelcast. To fulfil this requirement, the communication switch subsystem MUST be configured to use the same redundant network connection as Hazelcast.


Network connection redundancy delivery method

Neither Hazelcast nor the communication switch currently supports network interface failover. OS-level network interface bonding is therefore required to provide a single logical network interface that delivers redundant network connectivity.


Network Path Redundancy

The entire network path between nodes in the cluster must be redundant (including routers and switches).


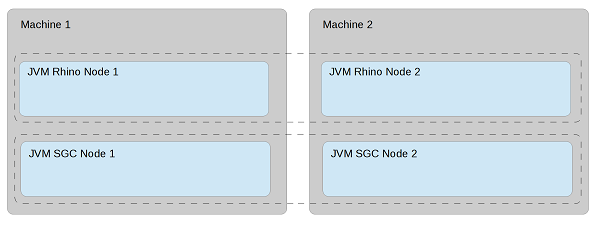

Recommended physical deployment model

In order to take full advantage of the fault-tolerant and high-availability modes supported by the OC SS7 stack, OpenCloud recommends using at least two dedicated machines with multicore CPUs and two or more Network Interface Cards.

Each SGC node should be deployed on one dedicated machine. However, hardware resources can also be shared with nodes of the Rhino Application Server.

The OC SS7 stack also supports less complex deployment modes which can also satisfy high-availability requirements.

To avoid single points of failure at the network and hardware levels, provide redundant connections for each kind of traffic. SCTP, which carries the SS7 traffic, itself provides a mechanism for IP multi-homing. For other kinds of traffic, an interface-bonding mechanism should be provided. Below is an example assignment of different kinds of traffic among network interface cards on one physical machine.

| Port | Network Interface Card 1 | Network Interface Card 2 |
|---|---|---|
| port 1 | SS7 IP addr 1 | SS7 IP addr 2 |
| port 2 | SGC Interconnect IP addr (bonded) | SGC Interconnect IP addr (bonded) |
| port 3 | Rhino IP addr | |
| port 4 | Management IP addr | |

While not required, bonding Management and Rhino traffic connections can provide better reliability.