This page describes the scenarios and methodology used when running the benchmarks.

Benchmarks are run using two scenarios. Each scenario is run in two configurations: with OCSS7 acting as the initiator, and with OCSS7 acting as the responder.

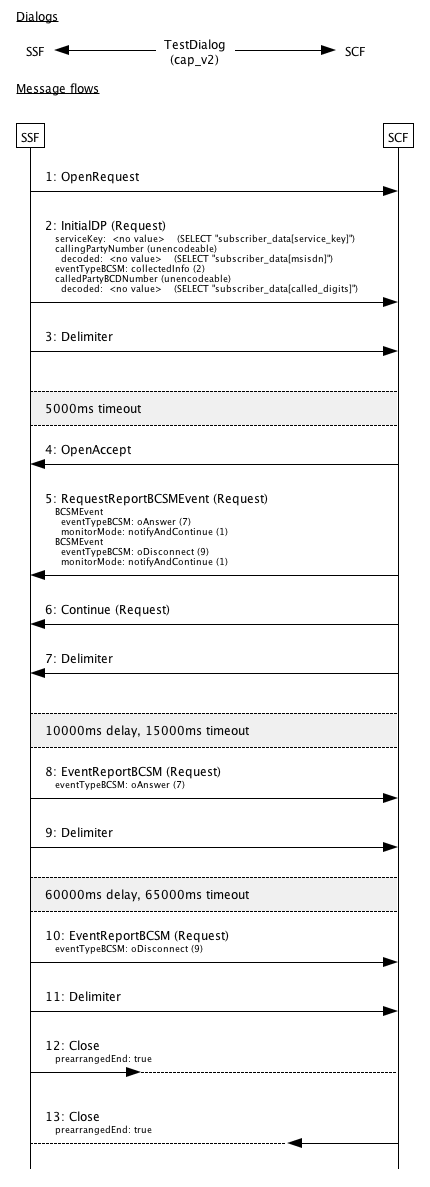

CAP call monitoring scenario

These scenarios consist of dialogs following the message flow:

- The initiator sends a TC-BEGIN initiating a CAP v2 dialog, containing a CAP InitialDP operation invoke
- The responder sends a TC-CONTINUE containing two operation invokes: CAP RequestReportBCSM(oAnswer, oDisconnect), and CAP Continue
- The initiator delays for 10 seconds
- The initiator sends a TC-CONTINUE containing a CAP EventReportBCSM(oAnswer) operation invoke
- The initiator delays for 60 seconds
- The initiator sends a TC-CONTINUE containing a CAP EventReportBCSM(oDisconnect) operation invoke
- The dialog is ended with prearranged end on both the initiator and responder
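Because of the two fixed delays in the flow above, each CAP dialog stays open for at least 70 seconds. The number of dialogs open at the same time under steady load can therefore be roughly estimated with Little's law (open dialogs ≈ arrival rate × lifetime). The following is a minimal sketch of that arithmetic only. The class name and the rate used in the usage note are hypothetical, and network and processing time are ignored, so the result is a lower bound.

```java
import java.time.Duration;

final class CapDialogEstimate {
    // Delays taken from the CAP call monitoring message flow.
    static final Duration ANSWER_DELAY = Duration.ofSeconds(10);
    static final Duration DISCONNECT_DELAY = Duration.ofSeconds(60);

    /** Minimum time a dialog stays open, ignoring network and processing time. */
    static Duration minimumDialogLifetime() {
        return ANSWER_DELAY.plus(DISCONNECT_DELAY);
    }

    /** Little's law: open dialogs = arrival rate x dialog lifetime. */
    static long estimatedOpenDialogs(long dialogsPerSecond) {
        return dialogsPerSecond * minimumDialogLifetime().getSeconds();
    }
}
```

For example, at a hypothetical 1000 new dialogs per second, `CapDialogEstimate.estimatedOpenDialogs(1000)` gives at least 70000 concurrently open dialogs.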

OCSS7 as the Initiator

In this configuration, the test system generates load, and is the initiator of the dialog. The responder is the external support system.

Response time is measured on the test system by the scenario simulator infrastructure, from immediately before submission of the TC-BEGIN to the TCAP stack, to immediately after receipt of the TC-CONTINUE containing the CAP Continue invoke from the TCAP stack.
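The measurement boundaries described above can be illustrated with a small recorder that takes a timestamp just before the TC-BEGIN is submitted and another just after the response is received. This is only a sketch of the idea, not the scenario simulator's actual implementation: the class, its method names, and the use of a numeric dialog ID as the key are all assumptions made for illustration. The clock is injected so the behaviour can be checked deterministically. In practice `System::nanoTime` would be a natural choice.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.LongSupplier;

final class ResponseTimeRecorder {
    // Start timestamps for dialogs that are still waiting for a response.
    private final Map<Long, Long> startNanosByDialog = new ConcurrentHashMap<>();
    private final LongSupplier nanoClock;

    ResponseTimeRecorder(LongSupplier nanoClock) {
        this.nanoClock = nanoClock;
    }

    /** Call immediately before the TC-BEGIN is handed to the TCAP stack. */
    void beforeBeginSubmitted(long dialogId) {
        startNanosByDialog.put(dialogId, nanoClock.getAsLong());
    }

    /**
     * Call immediately after the response is received from the TCAP stack.
     * Returns the elapsed time in nanoseconds, or -1 if the dialog is unknown.
     */
    long afterResponseReceived(long dialogId) {
        long now = nanoClock.getAsLong(); // take the timestamp first
        Long start = startNanosByDialog.remove(dialogId);
        return start == null ? -1L : now - start;
    }
}
```

The same pattern applies to both scenarios and both configurations. Only the message that stops the timer differs (the TC-CONTINUE containing CAP Continue, or the TC-END).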

OCSS7 as the Responder

In this configuration, the support system generates load, and is the initiator of the dialog. The test system responds to the dialog.

Response time is measured on the support system by the scenario simulator infrastructure, from immediately before submission of the TC-BEGIN to the TCAP stack, to immediately after receipt of the TC-CONTINUE containing the CAP Continue invoke from the TCAP stack.

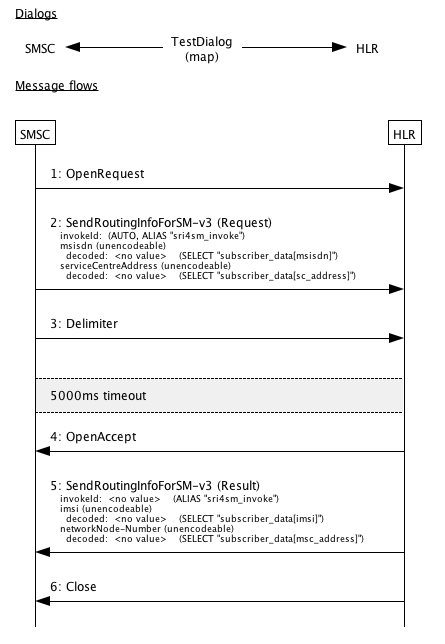

MAP SRI for SM scenario

These scenarios consist of dialogs following the message flow:

- The initiator sends a TC-BEGIN initiating a MAP dialog, containing a SEND-ROUTING-INFO-FOR-SM invoke
- The responder immediately responds with a TC-END containing a SEND-ROUTING-INFO-FOR-SM result

OCSS7 as the Initiator

In this configuration, the test system generates load, and is the initiator of the dialog. The responder is the external support system.

Response time is measured on the test system by the scenario simulator infrastructure, from immediately before submission of the TC-BEGIN to the TCAP stack, to immediately after receipt of the TC-END from the TCAP stack.

OCSS7 as the Responder

In this configuration, the support system generates load, and is the initiator of the dialog. The test system responds to the dialog, sending a TC-END.

Response time is measured on the support system by the scenario simulator infrastructure, from immediately before submission of the TC-BEGIN to the TCAP stack, to immediately after receipt of the TC-END from the TCAP stack.

Test setup

Each test run consists of a 10-minute ramp-up period, during which load is increased from zero to the target rate; a 30-minute measurement period at peak load; and a 2-minute drain period, during which no new dialogs are initiated.
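The three phases can be sketched as a simple load profile that maps elapsed time to a phase and an offered rate. This is an illustrative sketch only. The class and method names are hypothetical, and the linear shape of the ramp is an assumption, since the text above only says that load is increased from zero to the target rate.

```java
import java.time.Duration;

final class LoadProfile {
    static final Duration RAMP_UP = Duration.ofMinutes(10);
    static final Duration MEASUREMENT = Duration.ofMinutes(30);
    static final Duration DRAIN = Duration.ofMinutes(2);

    enum Phase { RAMP_UP, MEASUREMENT, DRAIN, FINISHED }

    /** Phase of the test run at the given offset from the start of the run. */
    static Phase phaseAt(Duration elapsed) {
        Duration measurementEnd = RAMP_UP.plus(MEASUREMENT);
        Duration drainEnd = measurementEnd.plus(DRAIN);
        if (elapsed.compareTo(RAMP_UP) < 0) return Phase.RAMP_UP;
        if (elapsed.compareTo(measurementEnd) < 0) return Phase.MEASUREMENT;
        if (elapsed.compareTo(drainEnd) < 0) return Phase.DRAIN;
        return Phase.FINISHED;
    }

    /** Rate of new dialogs per second; assumes a linear ramp (not stated in the source). */
    static double offeredRate(Duration elapsed, double targetRate) {
        switch (phaseAt(elapsed)) {
            case RAMP_UP:
                return targetRate * elapsed.toMillis() / (double) RAMP_UP.toMillis();
            case MEASUREMENT:
                return targetRate;
            default:
                return 0.0; // drain and afterwards: no new dialogs are initiated
        }
    }
}
```

Under these assumptions, `LoadProfile.offeredRate(Duration.ofMinutes(5), 1000.0)` is half the target rate, and any time from minute 40 onwards yields a rate of zero.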

The ramp-up period is included because the Oracle JVM uses a Just-In-Time (JIT) compiler. The JIT compiler compiles Java bytecode to machine code, and recompiles code on the fly to take advantage of optimizations not otherwise possible. This dynamic compilation and optimization process takes some time to complete, and during its early stages the node cannot process full load. In addition, JVM garbage collection does not reach full efficiency until several major garbage collection cycles have completed.

Only latency measurements during the measurement period are used; latency measurements during the ramp-up period are ignored.
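As an illustration of this rule, the sketch below keeps only the samples that fall inside the 30-minute measurement period. The `Sample` type and its fields are hypothetical, and attributing a sample to a period by the time its dialog started is an assumption. The actual tooling may attribute samples differently.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;

final class MeasurementWindowFilter {
    static final Duration RAMP_UP = Duration.ofMinutes(10);
    static final Duration MEASUREMENT = Duration.ofMinutes(30);

    /** One latency sample (hypothetical shape). */
    static final class Sample {
        final Duration startedAt;  // offset from the start of the test run
        final long latencyMicros;

        Sample(Duration startedAt, long latencyMicros) {
            this.startedAt = startedAt;
            this.latencyMicros = latencyMicros;
        }
    }

    /** Returns only the samples whose dialog started during the measurement period. */
    static List<Sample> measurementOnly(List<Sample> all) {
        Duration windowEnd = RAMP_UP.plus(MEASUREMENT);
        List<Sample> kept = new ArrayList<>();
        for (Sample s : all) {
            boolean afterRampUp = s.startedAt.compareTo(RAMP_UP) >= 0;
            boolean beforeDrain = s.startedAt.compareTo(windowEnd) < 0;
            if (afterRampUp && beforeDrain) {
                kept.add(s);
            }
        }
        return kept;
    }
}
```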

Load is not stopped between the end of the ramp-up period and the start of the test timer.